Create and execute test cases for SOAP service rules

Summary

SOAP services start processing in response to a request from an external application.

What happens if the external application that makes the requests is being built and tested at the same time that you are creating your application?

You can verify that the service will process data appropriately by using Automated Unit Testing and manually providing some representative data to process.

Suggested Approach

You can unit test a SOAP service rule on its own before testing it in the context of the entire application you are building. With Automated Unit Testing, you can save the test data that you use as test case rules. Then, the next time you test that rule, you can run the test case rather than manually re-entering the test data.
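The record-then-replay idea behind test cases can be illustrated outside the product. The following is a minimal conceptual sketch in Python, not Process Commander code: the function names `record_test_case` and `run_test_case`, the JSON storage format, and the `convert_currency` stand-in for a service rule are all hypothetical.

```python
import json


def record_test_case(name, inputs, rule):
    """Run the rule once and capture both the inputs and the observed results."""
    return {"name": name, "inputs": inputs, "expected": rule(**inputs)}


def run_test_case(test_case, rule):
    """Replay the saved inputs and compare the new results with the saved ones."""
    actual = rule(**test_case["inputs"])
    return actual == test_case["expected"], actual


def convert_currency(amount, rate):
    """Hypothetical stand-in for the service rule under test."""
    return {"converted": round(amount * rate, 2)}


# Record once, store the test case as text, then replay it later
# without re-entering the test data.
saved = json.dumps(
    record_test_case("basic conversion", {"amount": 100, "rate": 1.25}, convert_currency)
)
passed, actual = run_test_case(json.loads(saved), convert_currency)
print(passed, actual)
```

The point of the sketch is only that the saved test case carries its own input data, so a later run needs nothing but the test case and the rule.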

Creating SOAP service test cases

- Open the Service SOAP rule that you want to test.

- Click the Run toolbar button. The Simulate SOAP Service Execution window opens.

- In V6.1, go to the Test Cases tab of the opened rule instead of clicking the Run toolbar button.

- Click Record New Test Case. The Simulate SOAP Service Execution window opens.

The remaining steps for creating a test case are the same in V6.1.

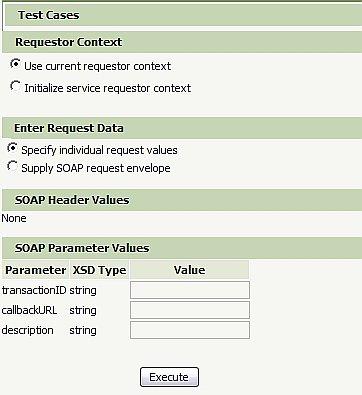

- In the Requestor Context section, you can choose to use the current requestor or to initialize a service requestor.

If you select the current requestor, the service runs as you, in your own session, with your access rights and RuleSet list. If you choose to initialize a service requestor, Process Commander creates a new requestor and runs the service as that requestor, with the access rights and RuleSet list specified in the access group for its service package.

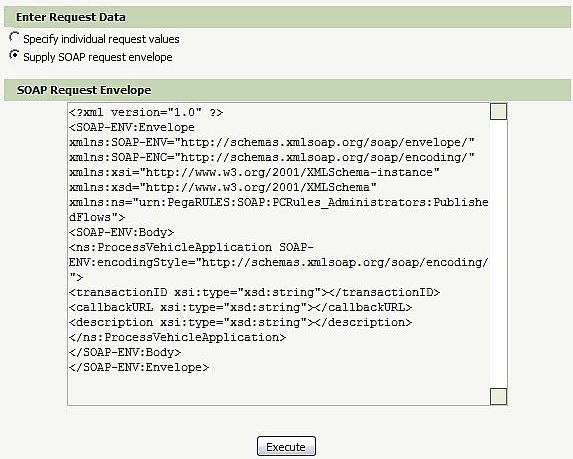

- In the Enter Request Data section, select whether to specify individual request values or to supply a SOAP request envelope.

If you choose to specify individual request values, enter the values for the SOAP parameters in the SOAP Parameters Values section. If you choose to supply a SOAP request envelope, the SOAP Request Envelope section appears in the window, and you can edit the request envelope there.

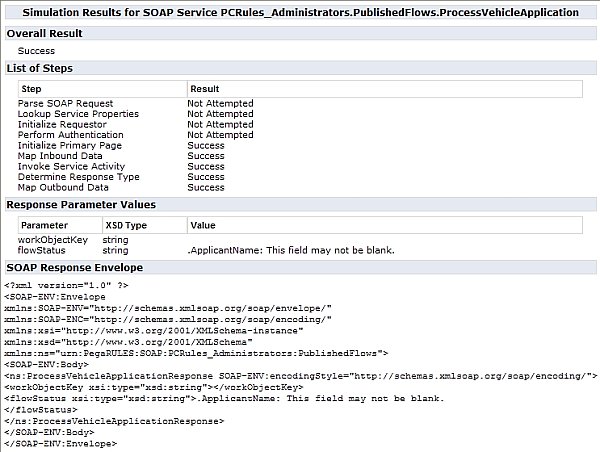
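If you supply a request envelope, it can help to draft it outside the tool first. The following Python sketch builds a SOAP 1.1 envelope with the standard library. The service namespace `urn:example:services`, the operation name `GetAccountBalance`, and the parameter `AccountID` are hypothetical placeholders; use the names that your own Service SOAP rule and its WSDL define.

```python
import xml.etree.ElementTree as ET

SOAP_ENV_NS = "http://schemas.xmlsoap.org/soap/envelope/"  # SOAP 1.1 envelope namespace
SERVICE_NS = "urn:example:services"  # hypothetical namespace for the service


def build_request_envelope(operation, params):
    """Return a SOAP 1.1 request envelope as a string."""
    ET.register_namespace("soapenv", SOAP_ENV_NS)
    envelope = ET.Element(f"{{{SOAP_ENV_NS}}}Envelope")
    body = ET.SubElement(envelope, f"{{{SOAP_ENV_NS}}}Body")
    # The operation element wraps one child element per request parameter.
    op = ET.SubElement(body, f"{{{SERVICE_NS}}}{operation}")
    for name, value in params.items():
        child = ET.SubElement(op, f"{{{SERVICE_NS}}}{name}")
        child.text = str(value)
    return ET.tostring(envelope, encoding="unicode")


# Hypothetical operation and parameter, for illustration only.
envelope_xml = build_request_envelope("GetAccountBalance", {"AccountID": "12345"})
print(envelope_xml)
```

The resulting text can be pasted into the SOAP Request Envelope section and adjusted there.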

- After you select the appropriate values for the SOAP Service rule, click Execute to test it. The Service Simulation Results window opens.

The Service Simulation Results window displays the overall results as well as the list of steps taken, Response Parameter values, and SOAP Response Envelope values.

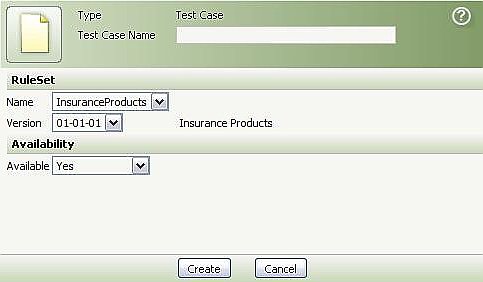

- When you are satisfied with the results, click Save Test Case. The New Test Case dialog box opens.

- In the Test Case Name field, enter a short string that describes the test case.

- Specify the RuleSet you created for test cases and click Create.

For more information on SOAP Service rules, see Testing Services and Connectors.

Running SOAP Service Test Cases

After you create a test case for a rule, it appears in the Saved Test Cases list in the Simulate SOAP Service Execution window for the tested rule.

To run a test case for a Service SOAP rule in V6.1:

- Open the rule that you want to test.

- Go to the Test Cases tab of the opened rule.

- Click the name of the test case.

The Simulate SOAP Service Execution window opens, and the system runs the test case and displays the results.

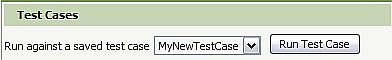

To run a test case:

- Open the rule you want to test.

- Click the Run toolbar icon. The Simulate SOAP Service Execution window opens.

- Select the test case you want to run from the list.

Because the test case rule contains the initial pages that were created, loaded, or copied before the rule was run, you do not have to recreate the initial conditions before running the test case.

- Click Run Test Case. Process Commander runs the test case and displays the results in the Service Simulation Results window. If the current results differ from the saved test case, the differences are displayed in the Simulate SOAP Service Execution window, along with the following available actions.

Save results: Click Save Results to save the results to the test case for reviewing later.

Overwrite the saved test case: If the new results are valid, you can click Overwrite Test Case to overwrite the test case and use the new information.

Ignore differences: You can choose to ignore particular differences by selecting them and then clicking Save Ignores. Instead of having a property flagged as a difference every time the test case runs, you can choose to have it ignored in future runs of this test case.

Starting with Version 6.1 SP2: You can choose to ignore a difference for all test cases in the application. You can also ignore an entire page, in which case all differences found on that page are ignored each time this test case runs. A page can be ignored only for this specific test case, not across all test cases.
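To make the effect of ignoring differences concrete, here is a small conceptual sketch in Python. It is not how Process Commander implements the comparison: the function `find_differences`, the nested-dictionary representation of pages and properties, and the page and property names are all assumptions chosen for illustration.

```python
def find_differences(expected, actual, ignored_properties=(), ignored_pages=()):
    """Compare two {page: {property: value}} mappings.

    Returns a list of (page, property, expected_value, actual_value) tuples,
    skipping ignored pages and ignored (page, property) pairs.
    """
    differences = []
    for page in sorted(set(expected) | set(actual)):
        if page in ignored_pages:
            continue  # every difference on an ignored page is skipped
        exp_page = expected.get(page, {})
        act_page = actual.get(page, {})
        for prop in sorted(set(exp_page) | set(act_page)):
            if (page, prop) in ignored_properties:
                continue  # this single property is skipped
            if exp_page.get(prop) != act_page.get(prop):
                differences.append((page, prop, exp_page.get(prop), act_page.get(prop)))
    return differences


# Hypothetical saved and current results.
saved = {
    "ResponsePage": {"Status": "OK", "Timestamp": "2010-01-01T10:00:00"},
    "AuditPage": {"RunID": "A1"},
}
current = {
    "ResponsePage": {"Status": "OK", "Timestamp": "2010-01-02T09:30:00"},
    "AuditPage": {"RunID": "B7"},
}

all_diffs = find_differences(saved, current)
remaining = find_differences(
    saved,
    current,
    ignored_properties={("ResponsePage", "Timestamp")},
    ignored_pages={"AuditPage"},
)
print(len(all_diffs), len(remaining))
```

Values that legitimately change on every run, such as timestamps or generated identifiers, are typical candidates for ignoring, because flagging them each time hides the differences that matter.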